These days, artificial intelligence is changing the way managers work, from how they plan and make decisions to how they deal with teams and customers. It’s no longer just about automation: AI has become part of everyday business, helping with both small tasks and big-picture strategy. As a result, managers need a working understanding of AI ethics and responsibility.

Future leaders must be equipped not just with tools, but also with judgement. For management students, this means learning how to question AI use and ensure that it’s applied responsibly across business functions. This is why even colleges offering an MBA in financial management are now integrating AI modules into their core curricula.

What Is AI Ethics in Business?

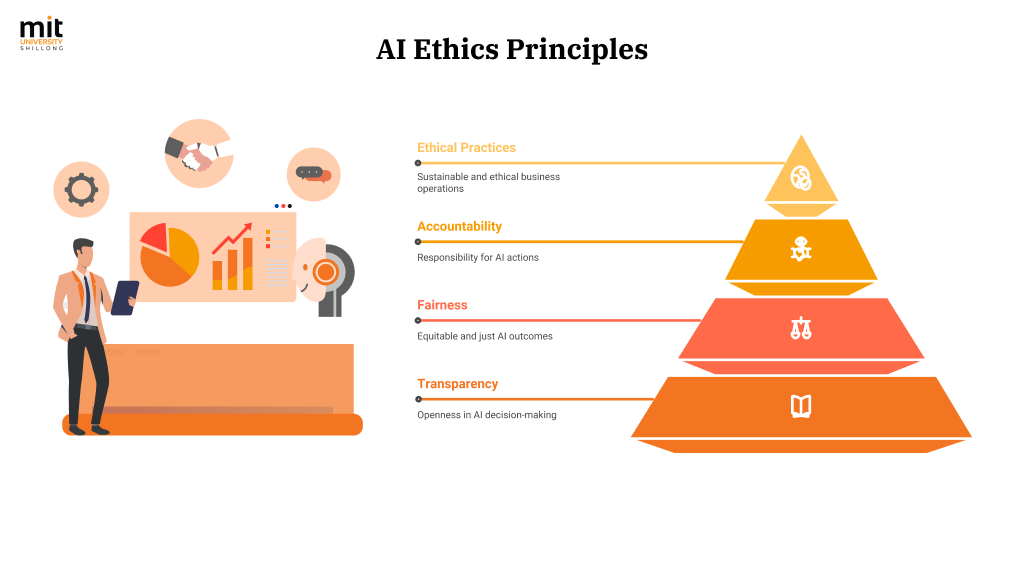

AI ethics is about ensuring that artificial intelligence is used in ways that are transparent, fair, and accountable. In business, this becomes especially important because AI tools often make or support decisions that affect people, such as hiring, loan approvals, performance scoring, or even customer pricing.

Key risks include:

- Bias in data

- Lack of transparency (black-box models)

- Absence of accountability

- Poor oversight of automated decisions

To address these risks, businesses are adopting structured responsible-AI programmes and formal AI governance frameworks for managers. These frameworks introduce controls and policies so that AI tools align with human values and legal standards.

Why Management Students Must Learn AI Responsibility

Most organisations now expect managers, not just data scientists, to help shape AI strategy. This includes reviewing how AI is deployed, identifying ethical concerns, and maintaining compliance with data and workplace regulations.

This is why many B-schools have begun incorporating AI ethics into their core or elective curricula. Learning this early trains students to think critically about how algorithms influence business outcomes. It also builds awareness of the reputational risks companies face when AI decisions go unchecked.

Case Studies and Real Examples

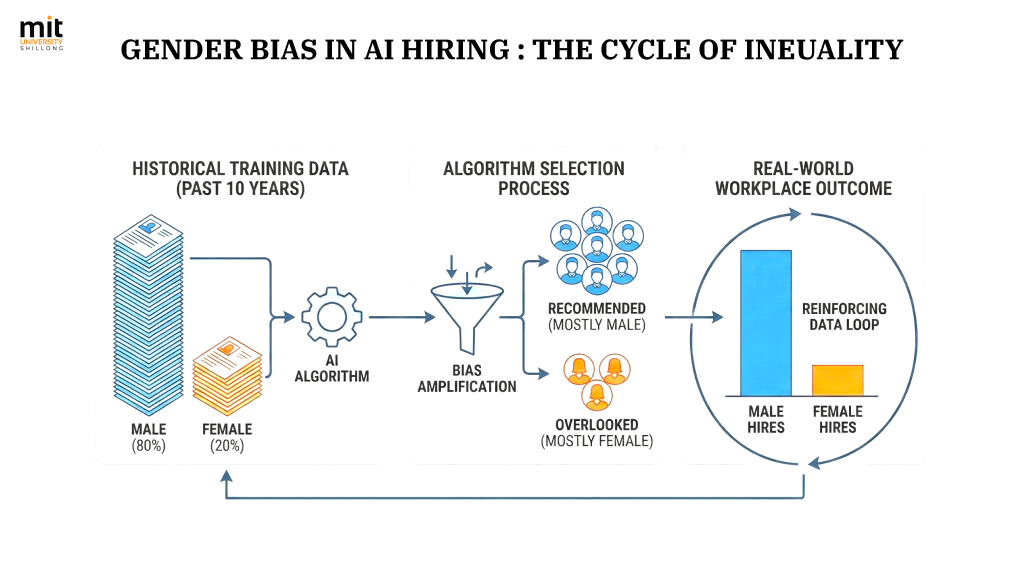

In 2018, Reuters reported that Amazon had scrapped an AI‑driven recruiting engine after discovering it was biased against women.

- The tool was trained on resumes submitted to the company over the previous decade, most of which came from men; as a result, the model learned to favour male candidates.

- Amazon’s engineers found that the system penalised resumes that included the word “women’s” (as in “women’s chess club captain”) and downgraded graduates of all‑women’s colleges.

- The company stopped using the tool for technical roles and shifted to redesigning its processes under human review.

Take‑away lessons:

- Any AI hiring tool needs a rigorous bias audit before full deployment.

- Historical data can reinforce past inequalities if not adjusted.

- Human oversight remains essential even when using advanced systems.

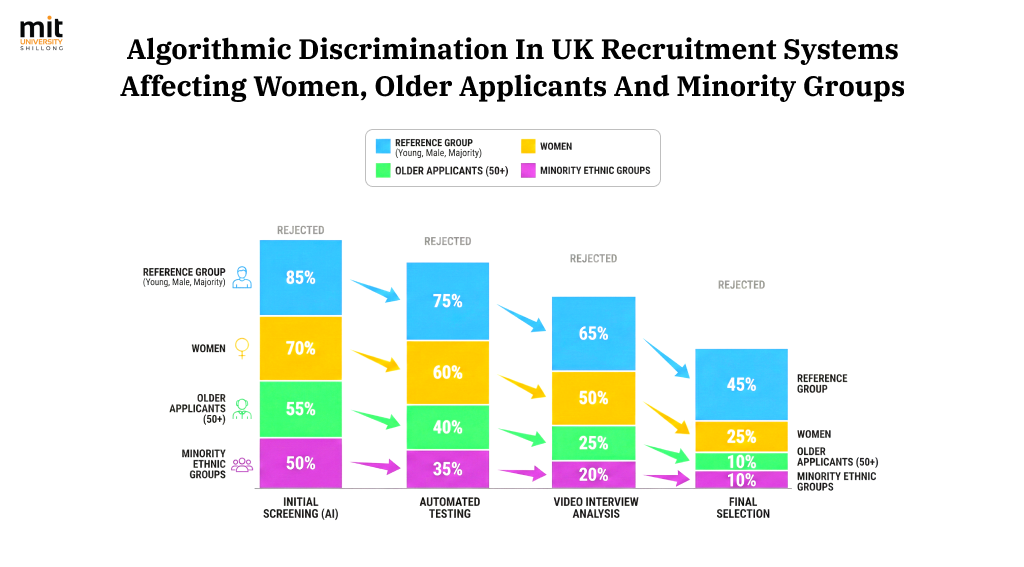

An analysis published in 2023 suggested that algorithmic hiring systems used by UK employers risked discriminating against women, older applicants, and minority groups.

- One university‑led report found a serious risk of discrimination when AI systems screened candidates.

- This example highlights the need for transparent scoring, auditable decisions, and stakeholder accountability.

Take‑away lessons:

- AI doesn’t eliminate bias just by being “automated”.

- Managers must ensure fairness, especially in high‑stakes decisions like hiring.

- Ethical governance frameworks should include metrics, audit logs, and review loops.

Principles of Responsible AI Governance


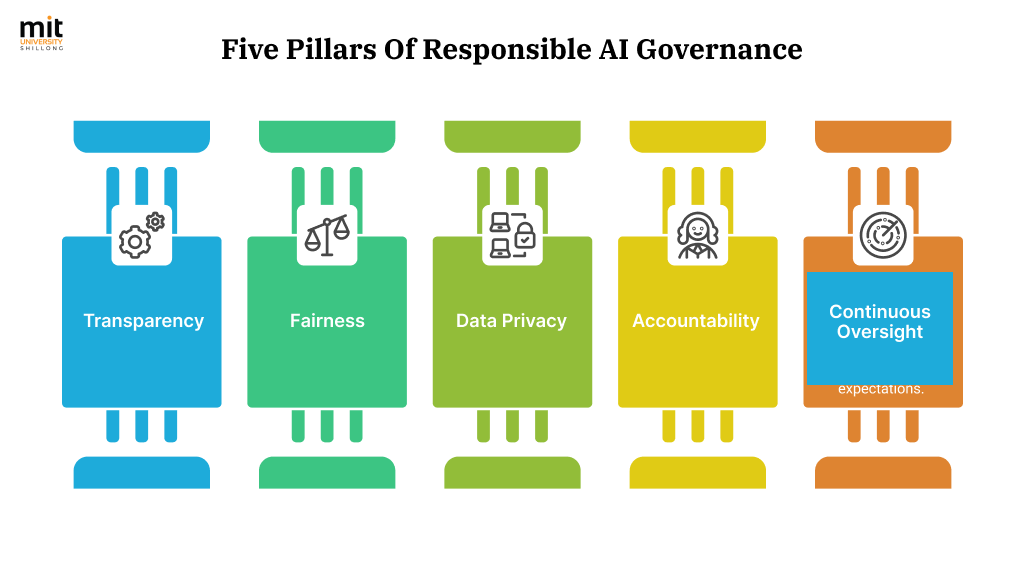

Pillar 1: TRANSPARENCY

- Principle: Make AI intent and limitations clear

- Implementation:

  - Document decision logic

  - Explain model predictions

  - Communicate to stakeholders

- Business Example: Loan denial explanations

- Metrics: User understanding scores
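To make the loan denial example more concrete, here is a minimal sketch of how a simple scoring model could report the main reasons behind a refusal. The feature names, weights, baseline, and approval rule are all hypothetical and chosen only for illustration; a real lender's model and reason codes would look different.

```python
# Hypothetical linear scorecard: the features, weights, and baseline are invented.
WEIGHTS = {"income_k": 1, "years_employed": 2, "missed_payments": -15}
BASELINE = {"income_k": 50, "years_employed": 5, "missed_payments": 0}


def explain_decision(applicant, top_n=2):
    """Score an applicant relative to the baseline and list the main reasons for a denial."""
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS
    }
    score = sum(contributions.values())
    approved = score >= 0  # assumed rule: approve when the score is not below baseline
    # Most negative contributions first: these pulled the score down the most.
    negatives = sorted((value, name) for name, value in contributions.items() if value < 0)
    reasons = [] if approved else [name for _, name in negatives[:top_n]]
    return {"approved": approved, "score": score, "reasons": reasons}


applicant = {"income_k": 40, "years_employed": 6, "missed_payments": 2}
decision = explain_decision(applicant)
print(decision)
```

For this invented applicant, the sketch reports a denial and names missed payments and income as the two main reasons, which is the kind of plain explanation a customer or regulator could follow.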

Pillar 2: FAIRNESS

- Principle: Test for bias regularly

- Implementation:

  - Demographic parity checks

  - Equal opportunity testing

  - Calibration across groups

- Business Example: Recruitment screening balance

- Metrics: Disparity ratios, fairness indices
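The demographic parity and equal opportunity checks listed above can be sketched in a few lines. This is an illustrative example only: the candidate records, the group labels, and the 0.8 review threshold (borrowed from the commonly cited "four-fifths" rule of thumb) are assumptions, not a legal test.

```python
from collections import defaultdict


def selection_rates(records):
    """Demographic parity view: share of all candidates shortlisted in each group."""
    totals, selected = defaultdict(int), defaultdict(int)
    for r in records:
        totals[r["group"]] += 1
        selected[r["group"]] += r["shortlisted"]
    return {g: selected[g] / totals[g] for g in totals}


def true_positive_rates(records):
    """Equal opportunity view: share of qualified candidates shortlisted in each group."""
    totals, hits = defaultdict(int), defaultdict(int)
    for r in records:
        if r["qualified"]:
            totals[r["group"]] += 1
            hits[r["group"]] += r["shortlisted"]
    return {g: hits[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means parity."""
    return min(rates.values()) / max(rates.values())


# Hypothetical screening results: 10 candidates per group, 6 qualified in each.
records = (
    [{"group": "A", "qualified": True, "shortlisted": 1}] * 5
    + [{"group": "A", "qualified": True, "shortlisted": 0}] * 1
    + [{"group": "A", "qualified": False, "shortlisted": 0}] * 4
    + [{"group": "B", "qualified": True, "shortlisted": 1}] * 3
    + [{"group": "B", "qualified": True, "shortlisted": 0}] * 3
    + [{"group": "B", "qualified": False, "shortlisted": 0}] * 4
)

parity_ratio = disparity_ratio(selection_rates(records))
opportunity_ratio = disparity_ratio(true_positive_rates(records))
needs_review = parity_ratio < 0.8  # assumed rule-of-thumb threshold
print(parity_ratio, opportunity_ratio, needs_review)
```

A manager does not need to write this code, but knowing what a disparity ratio measures makes it easier to ask a vendor or data team the right questions.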

Pillar 3: DATA PRIVACY

- Principle: Collect only necessary data, store securely

- Implementation:

  - Data minimization

  - Encryption protocols

  - Access controls

- Business Example: Customer data protection

- Metrics: Compliance rates, breach incidents
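As a rough illustration of data minimisation, the sketch below keeps only the fields an analysis actually needs and replaces the customer identifier with a keyed hash. The field names, the allow-list, and the demo key are hypothetical; in practice the key would be stored and managed securely, and this step would sit alongside encryption and access controls rather than replace them.

```python
import hashlib
import hmac

# Hypothetical allow-list: only these fields are needed for the analysis.
ALLOWED_FIELDS = {"customer_id", "purchase_total", "region"}


def minimise(record, secret_key: bytes):
    """Drop fields that are not needed and pseudonymise the customer identifier."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # A keyed hash gives a stable pseudonym without exposing the real ID.
    digest = hmac.new(secret_key, str(kept["customer_id"]).encode(), hashlib.sha256)
    kept["customer_id"] = digest.hexdigest()[:16]
    return kept


raw = {
    "customer_id": 10482,
    "email": "customer@example.com",
    "date_of_birth": "1990-01-01",
    "purchase_total": 129.5,
    "region": "Meghalaya",
}
demo_key = b"demo-key-not-for-production"  # assumption: a real key comes from a secrets store
clean = minimise(raw, demo_key)
print(clean)
```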

Pillar 4: ACCOUNTABILITY

- Principle: Assign responsibility for outcomes

- Implementation:

  - Clear ownership structure

  - Decision audit trails

  - Escalation protocols

- Business Example: Model performance reviews

- Metrics: Response times, resolution rates
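One way to picture a decision audit trail is as an append-only log in which every entry names an owner and is linked to the previous entry by a hash, so later edits can be detected. The sketch below is a simplified illustration; the entry fields, owner names, and timestamps are hypothetical, and a production system would also need secure storage and access control.

```python
import hashlib
import json


def _hash_body(body):
    """Stable SHA-256 hash of an entry's contents."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, decision, owner, timestamp):
    """Add a decision record that is chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {
        "decision": decision,
        "owner": owner,
        "timestamp": timestamp,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _hash_body(body)})


def verify(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _hash_body(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "loan_application_17: denied", "credit_risk_lead", "2025-01-15T10:00:00Z")
append_entry(audit_log, "loan_application_17: escalated", "compliance_officer", "2025-01-16T09:30:00Z")
print(verify(audit_log))
```

Because each entry records who owned the decision and when, the same log can also feed the response-time and resolution-rate metrics mentioned above.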

Pillar 5: CONTINUOUS OVERSIGHT

- Principle: Review frequently, not once

- Implementation:

  - Regular model monitoring

  - Performance drift detection

  - Stakeholder feedback integration

- Business Example: Quarterly ethics audits

- Metrics: Audit frequency, issue detection rates
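Drift detection can be illustrated with the Population Stability Index (PSI), one common way to compare the scores a model produces today with those it produced when it was approved. This is a minimal sketch under stated assumptions: the score samples and bin edges are invented, all values are assumed to fall within the bin edges, and the 0.25 alert level is a widely used rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index between two samples over shared bin edges."""

    def shares(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(edges) - 1):
                is_last_bin = i == len(edges) - 2
                # Bins are half-open, except the last one, which includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (is_last_bin and v == edges[-1]):
                    counts[i] += 1
                    break
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]      # scores at approval time
recent_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.85, 0.95]      # hypothetical recent scores

drift = psi(baseline_scores, recent_scores, edges)
needs_audit = drift > 0.25  # assumed rule-of-thumb alert level
print(round(drift, 2), needs_audit)
```

Running a check like this on a schedule turns "review frequently, not once" into a routine that can trigger an ethics audit when the numbers move.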


- Transparency – Make AI intent and limitations clear to users

- Fairness – Regularly test for bias across demographic and behavioural groups

- Data Privacy – Collect only necessary data and store it securely

- Accountability – Assign responsibility for model performance and outcomes

- Continuous Oversight – Review tools and decisions frequently, not once

Understanding these principles prepares students to handle AI‑related decisions in core management roles. They are now taught alongside policy modules at top institutes offering an MBA in Shillong.

Shape a Future Where Ethics Leads Innovation

Infographic: MBA curriculum transformation from 2015 (traditional focus on finance, marketing, and operations with minimal technology) to 2025 (financial management integrated with AI ethics, algorithmic fairness, data governance, responsible technology deployment, and case-based learning on real AI failures and successes).

Courses on AI in business are becoming as essential as those in finance or operations. Many colleges offering an MBA in financial management are now integrating AI policy and data ethics into their programmes. This ensures that the next generation of managers understands both the risks and rewards of AI tools.

Whether you’re looking into sustainability, leadership, or the best MBA finance programs, it’s clear that responsible technology use will be a big part of your future.